[Repost] The very brief history of Computer Science

I believe it is fundamental to have an overview of the history that later formed Computer Science. People know the work of individuals such as Dijkstra. But there are so many others who’ve made valuable contributions to make the computers we have today happen. It was a process that started in the early 800s and began to grow in the 1800s and the 1900s.

How did simple changes in voltage lead to the development of machines such as computers? Perhaps, we can attribute it to some incredible work by individuals from across different centuries.

Let’s look at a few concepts these “greats” came up with during this amazing journey.

300 BC

In 300 BC Euclid wrote a series of 13 books called Elements. The definitions, theorems, and proofs covered in the books became a model for formal reasoning. Elements was instrumental in the development of logic and modern science. It is the first documented work in mathematics that used a series of numbered chunks to break down the solution to a problem.

300 BC to 800 AD

Following Euclid, notes about recipes and similar step-by-step instructions were the closest thing to what we call algorithms today. Mathematical methods were also invented in this period: the Sieve of Eratosthenes, Euclid’s algorithm, and methods for factorization and square roots are a few examples.

It seems common to us now to list things in the order in which we will do them. But in mathematics at that time, it was not usual.
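To make two of those methods concrete, here is a minimal Python sketch of Euclid’s algorithm and the Sieve of Eratosthenes. The function names `gcd` and `primes_up_to` are chosen for this illustration only; the code is a modern restatement, not how the methods were originally written down.

```python
def gcd(a: int, b: int) -> int:
    """Euclid's algorithm: replace (a, b) with (b, a mod b) until b is 0."""
    while b != 0:
        a, b = b, a % b
    return a


def primes_up_to(n: int) -> list[int]:
    """Sieve of Eratosthenes: cross out the multiples of each prime in turn."""
    if n < 2:
        return []
    is_prime = [True] * (n + 1)
    is_prime[0] = is_prime[1] = False
    p = 2
    while p * p <= n:
        if is_prime[p]:
            # Smaller multiples of p were already crossed out by smaller primes.
            for multiple in range(p * p, n + 1, p):
                is_prime[multiple] = False
        p += 1
    return [i for i, flag in enumerate(is_prime) if flag]


print(gcd(48, 18))
print(primes_up_to(30))
```

Both are exactly the kind of ordered list of steps described above: each step depends only on the result of the previous one.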

800s

The story really starts in the 800s with Al-Khwārizmī. His name roughly translates to Algoritmi in Latin, and he developed a technique called Algorism. Algorism is the technique of performing arithmetic with Hindu-Arabic numerals.

Al-Khwārizmī is often credited as the first to develop the concept of the algorithm in mathematics.

1300s

Then came Ramon Llull, who is considered a pioneer of computation theory. He started working on a new system of logic in his work Ars magna, which influenced the development of several ideas within mathematics, specifically:

- The idea of a formal language

- The idea of a logical rule

- The computation of combinations

- The use of binary and ternary relations

- The use of symbols for variables

- The idea of substitution for a variable

- The use of a machine for logic

All these led him to pioneer the idea of representing and manipulating knowledge using symbols and logic.

1600s

Then came Blaise Pascal. Pascal was a French mathematician who, at an early age, was a pioneer in the field of calculating machines. He began work on a mechanical calculator in 1642, and the resulting machine is now known as Pascal’s calculator (or the Pascaline). This was an important milestone in mathematics and engineering. It showed the general public that tools can be built, using mathematics, to simplify everyday tasks such as accounting. (Fun fact: He built the first prototype to help his father, a tax official, with his calculations!)

Following Llull’s and Pascal’s work, there was Gottfried Leibniz. He made incredible achievements in symbolic logic and made developments in first-order logic. These were important for the development of theoretical computer science. Some of his work still carries his name:

- Leibniz’s law (in first-order logic)

- Leibniz’s Rule (in mathematics)

Leibniz also developed the modern binary system that we use today. He wrote about it in his paper “Explication de l’Arithmétique Binaire.”

1800s

Charles Babbage, often called the father of computing, pioneered the concept of a programmable computer. Babbage designed the first mechanical general-purpose computer, which he called the Analytical Engine. Its architecture was like that of the modern computer, and it was the first design that we now call Turing complete. It incorporated an arithmetic logic unit (ALU), integrated memory, and control flow in the form of conditional branching and loops. Babbage designed it, but it was never completed; it wasn’t until the 1940s that general-purpose computers were actually built. In his design, the machine was programmed with punched cards, and its numeral system was decimal.

Working with Charles Babbage on his Analytical Engine was Ada Lovelace. She is often regarded as the first computer programmer. In her notes on the engine, she wrote what is considered the first algorithm intended to be carried out by a machine. The algorithm was to compute Bernoulli numbers. Her work is seen as an early contribution to what became Scientific Computing. (Fun fact: Her name inspired the programming language Ada!)
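For a sense of what computing Bernoulli numbers involves, here is a small Python sketch. It is not a reconstruction of Lovelace’s program: it uses a standard textbook recurrence, the convention in which B_1 = -1/2, and a hypothetical function name `bernoulli_numbers`.

```python
from fractions import Fraction
from math import comb


def bernoulli_numbers(count: int) -> list[Fraction]:
    """Return B_0 .. B_(count-1) as exact fractions.

    Uses the recurrence: for m >= 1, the sum over k = 0..m of
    C(m + 1, k) * B_k is 0, solved for B_m.
    """
    numbers: list[Fraction] = []
    for m in range(count):
        if m == 0:
            numbers.append(Fraction(1))
            continue
        total = sum(comb(m + 1, k) * numbers[k] for k in range(m))
        numbers.append(-total / (m + 1))
    return numbers


for index, value in enumerate(bernoulli_numbers(7)):
    print(f"B_{index} = {value}")
```

Each number depends on all the earlier ones, which is why the computation suits a machine that can store intermediate results and loop.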

Then came a man most computer scientists should know about — George Boole. He was the pioneer of what we call Boolean Logic, which as we know is the basis of the modern digital computer. Boolean Logic is also the basis for digital logic and digital electronics. Most logic within electronics now stands on top of his work. Boole also utilized the binary system in his work on the algebraic system of logic. This work also inspired Claude Shannon to use the binary system in his work. (Fun fact: The boolean data-type is named after him!)
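As a rough illustration of how Boolean logic underpins digital arithmetic, the sketch below adds two integers using only AND, OR, and XOR on single bits. The function names and the 8-bit default width are assumptions made for this example; real hardware implements the same idea with logic gates rather than Python functions.

```python
def half_adder(a: bool, b: bool) -> tuple[bool, bool]:
    """Add two bits: the sum is XOR, the carry is AND."""
    return a != b, a and b


def full_adder(a: bool, b: bool, carry_in: bool) -> tuple[bool, bool]:
    """Add two bits plus an incoming carry using two half adders and an OR."""
    partial_sum, carry_one = half_adder(a, b)
    total, carry_two = half_adder(partial_sum, carry_in)
    return total, carry_one or carry_two


def add_bits(x: int, y: int, width: int = 8) -> int:
    """Ripple-carry addition of two non-negative integers, truncated to `width` bits."""
    carry = False
    result = 0
    for i in range(width):
        bit_x = bool((x >> i) & 1)
        bit_y = bool((y >> i) & 1)
        bit_sum, carry = full_adder(bit_x, bit_y, carry)
        if bit_sum:
            result |= 1 << i
    return result


print(add_bits(5, 9))
```

Because the result is truncated to the chosen width, adding 1 to 255 with the default 8 bits wraps around to 0, just as it would in an 8-bit register.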

Gottlob Frege defined Frege’s propositional calculus and predicate calculus (first-order logic). These were vital in the development of theoretical computer science. (Fun fact: The Frege programming language is named after him!)

1900s

In the early 1900s, Bertrand Russell invented type theory to avoid paradoxes in different kinds of formal logic. He proposed this theory after he discovered that Gottlob Frege’s version of naive set theory gave rise to a contradiction, now known as Russell’s paradox. Russell’s solution avoids the paradox by first creating a hierarchy of types, then assigning each mathematical entity to a type.

After Russell came the amazing Alonzo Church, who introduced lambda calculus to the world. Lambda calculus introduced a new way of viewing problems in mathematics, and inspired a large number of programming languages. It played a big part in the development of functional programming languages.
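To give a flavor of lambda calculus, here is a small sketch of Church numerals written with Python lambdas. A number n is represented as a function that applies another function n times. The names `zero`, `succ`, `add`, `mul`, and `to_int` are illustrative choices, and `to_int` steps outside pure lambda calculus only so the results can be printed.

```python
# A Church numeral n takes a function f and returns "f applied n times".
zero = lambda f: lambda x: x
succ = lambda n: lambda f: lambda x: f(n(f)(x))

# Addition: apply f n times, then m more times.
add = lambda m: lambda n: lambda f: lambda x: m(f)(n(f)(x))

# Multiplication: repeat "apply f n times" m times.
mul = lambda m: lambda n: lambda f: m(n(f))


def to_int(numeral) -> int:
    """Convert a Church numeral to a Python int by counting applications."""
    return numeral(lambda k: k + 1)(0)


one = succ(zero)
two = succ(one)
three = succ(two)

print(to_int(add(two)(three)))
print(to_int(mul(two)(three)))
```

Everything here is built from single-argument functions, which is the style that functional programming languages later made practical.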

Claude Shannon founded information theory and is also known for the Shannon-Fano algorithm. He focused on efficiently and reliably transmitting and storing information. At MIT, he wrote a master’s thesis demonstrating that Boolean algebra could be used to design and simplify electrical switching circuits.

Everyone knows Alan Turing. He formalized a lot of concepts in theoretical Computer Science with his work on the Turing Machine. Turing also contributed heavily to Artificial Intelligence theory — a notable example being the Turing Test. Apart from his research, he was a codebreaker at Bletchley Park during WWII, and was deeply interested in mathematical biology theory. (His work and his papers are deeply insightful — take a look please.)

Grace Hopper was first exposed to Computer Science when she was assigned to work on the first large-scale digital computer at Harvard. Her task was to design and implement a method to use computers to calculate the position of ships. In the early 1950s, she built one of the first compilers, a program that translates human-readable code into machine code. Her later English-like language FLOW-MATIC strongly influenced the design of COBOL. Her vision played an incredible part in the development of Computer Science, and she foresaw a lot of trends in computing. (Fun fact: She is credited with popularizing the term debugging!)

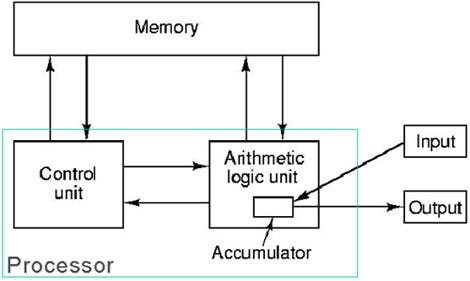

John von Neumann, a famous mathematician and physicist, was part of the Manhattan Project. But he’s also important in the history of Computer Science. He designed the Von Neumann architecture. Even if you have not heard of it by name, I am quite certain you have seen the model he came up with.

One of his biggest principles was the idea that data and programs can be stored in the same memory. This idea implies that a machine can alter both its program and its internal data.

He contributed to pretty much everything that has any relation to mathematics.

John Backus invented the FORTRAN language with his team at IBM. Backus also made many important contributions to functional programming languages and systems, and he served on committees developing the ALGOL family of programming languages. Alongside Peter Naur, he developed the Backus-Naur Form, a notation used to describe the syntax of programming languages.

Interestingly, Backus wrote an article titled “Can Programming Be Liberated from the von Neumann Style? A Functional Style and Its Algebra of Programs,” which is worth reading!

Alongside Backus, Peter Naur made major contributions to the definition of ALGOL 60 and to the Backus-Naur Form.

Noam Chomsky, a brilliant linguist, has made both indirect and direct contributions to Computer Science. His work on the Chomsky hierarchy and the Chomsky normal form is well known in the study of formal grammars.

Edsger Dijkstra is someone we all learn about in our algorithms class. In 1956, he was given the task of showing the power of the ARMAC computer. He came up with the shortest-path algorithm (Dijkstra’s algorithm). Next, ARMAC engineers wanted to cut the copper wire costs. To solve that task, Dijkstra applied a version of the shortest sub-spanning tree algorithm (Prim’s algorithm).

That’s the kind of man Dijkstra was! Dijkstra also formulated the Dining philosophers problem, and he wrote the well-known letter arguing that go-to statements are considered harmful, which a popular xkcd comic refers to.
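The shortest-path algorithm mentioned above can be sketched in a few lines of Python. This is a common modern formulation using a priority queue from the standard `heapq` module, not Dijkstra’s original 1956 presentation; the function name `shortest_paths` and the small example graph are made up for illustration.

```python
import heapq


def shortest_paths(graph: dict[str, dict[str, int]], source: str) -> dict[str, int]:
    """Return the shortest distance from `source` to every reachable node.

    `graph` maps each node to a dict of {neighbor: non-negative edge weight}.
    """
    dist = {source: 0}
    queue = [(0, source)]
    while queue:
        d, node = heapq.heappop(queue)
        if d > dist.get(node, float("inf")):
            continue  # Stale queue entry: a shorter route was already found.
        for neighbor, weight in graph.get(node, {}).items():
            candidate = d + weight
            if candidate < dist.get(neighbor, float("inf")):
                dist[neighbor] = candidate
                heapq.heappush(queue, (candidate, neighbor))
    return dist


# Hypothetical example graph with directed, weighted edges.
example_graph = {
    "A": {"B": 4, "C": 1},
    "B": {"D": 1},
    "C": {"B": 2, "D": 5},
    "D": {},
}

print(shortest_paths(example_graph, "A"))
```

In this example the direct edge from A to B costs 4, but the algorithm finds the cheaper route through C with total cost 3. The approach relies on edge weights being non-negative.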

Donald Knuth is a pioneer in the field of analysis of algorithms. He worked on both determining the computational complexity of algorithms and developing the mathematical techniques for analyzing them. He’s also widely known for creating the TeX typesetting system, for the book The Art of Computer Programming, for the KMP (Knuth–Morris–Pratt) algorithm, and much more.

Leslie Lamport laid out the foundations for the field of distributed systems. (Fun fact: he won the Turing Award in 2013. He also lives in New York, so meet him if you get a chance.)

Vint Cerf and Bob Kahn are known as the fathers of the Internet. They designed the TCP/IP protocols, and the architecture of how the Internet would be laid out.

Following their progress, in 1989/1990, Tim Berners-Lee invented the World Wide Web. Along with his colleague Robert Cailliau, he carried out the first HTTP communication between a server and a client. This was an important milestone in Computer Science: it represented a new era of communication and also demonstrated the practical nature of theories in Computer Science.

Let me know if I have gotten anything incorrect or have not made anything clear! Also, I wasn’t able to include every individual along the way, but let me know if I’ve missed anyone obvious. Please expand on things I have written through comments.

I also did not go into much detail with every individual. In the future, I am going to write individual posts about each of these people, with more details about their accomplishments.

Resources to learn next:

Key things to research after:

First-order logic, Jacquard loom, Analytical Engine, Boolean algebra, and much more!

- First-order logic: http://cs.nyu.edu/davise/guide.html, http://cs.nyu.edu/faculty/davise/ai/pred-examples.html

- Analytical and Difference Engines: http://en.wikipedia.org/wiki/Analytical_Engine#Comparison_to_other_early_computers

- Type Theory: https://www.princeton.edu/~achaney/tmve/wiki100k/docs/Type_theory.html

- The Chomsky Hierarchy: http://athena.ecs.csus.edu/~gordonvs/135/resources/ChomskyPresentation.pdf

One of my favorite talks is by Donald Knuth. His talk, titled “Let’s Not Dumb Down the History of Computer Science,” is just amazing.

Couple of books:

— Out of their Minds: The Lives and Discoveries of 15 Great Computer Scientists, Dennis Shasha and Cathy Lazere

— Selected Papers on Computer Science, Donald Knuth

Thanks for reading! If you’d like to check out some of my other projects, visit my portfolio!

The author of this blog is Abhi Agarwal. Follow him on Twitter – https://twitter.com/abhiagarwal
