It is the best choice so far for the player MAX. We want to get the highest possible value here.

Beta: It is the best choice so far for MIN, and it has to be the lowest possible value.

Note: Each node keeps track of its own alpha and beta values. Alpha can be updated only when it’s MAX’s turn and, similarly, beta can be updated only when it’s MIN’s turn.

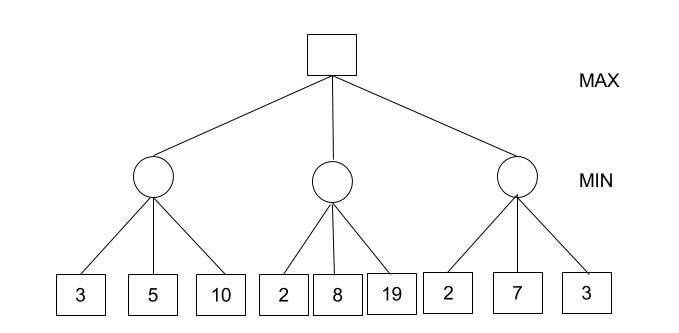

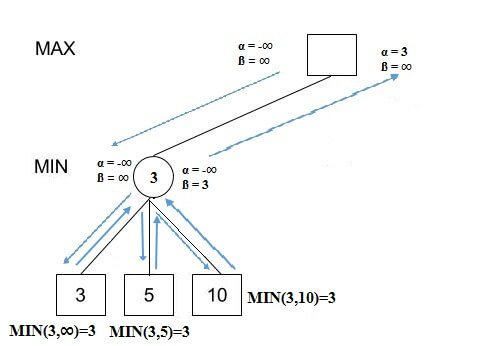

How does alpha-beta pruning work?

- Initialize alpha = -infinity and beta = infinity as the worst possible cases. The condition to prune a node is when alpha becomes greater than or equal to beta.

- Start by assigning the initial values of alpha and beta to the root. Since alpha is less than beta, we don’t prune it.

- Carry these values of alpha and beta to the child node on the left. This is a MIN node, so only beta can change: from the utility values of its terminal states, beta is lowered to 3. When a terminal state’s value is not lower than the current beta, we don’t have to update beta, and we don’t prune because alpha is still less than beta. The same holds for the third child node. Backtracking to the root, we set alpha=3, because 3 is now the minimum value that MAX is guaranteed to get.

- Now, alpha=3 and beta=infinity at the root. So, we don’t prune. Carrying this to the center node, and calculating MIN{2, infinity}, we get alpha=3 and beta=2.

- Prune the second and third child nodes of the center node because alpha is now greater than beta.

- Alpha at the root remains 3 because it is greater than 2. Carrying this to the rightmost child node, evaluate MIN{infinity,2}=2. Update beta to 2 and alpha remains 3.

- Prune the second and third child nodes of the rightmost node because alpha is now greater than beta.

- Hence, we get 3, 2, 2 at the left, center, and right MIN nodes, respectively. Calculating MAX{3,2,2}, we get 3. Therefore, we correctly find the minimax decision without even looking at four of the leaves.
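
As a sanity check on the walkthrough, here is a minimal Python sketch of plain minimax with no pruning. It examines every leaf and should arrive at the same decision. The tree is an assumption: only the MIN-node results (3, 2, 2) and the first leaf of the center and right nodes come from the steps above, while the remaining leaf values and the names `TREE` and `minimax` are made up for illustration.

```python
# Hypothetical tree: a MAX root over three MIN nodes with three leaves each.
# Leaf values other than the first leaf of the center and right nodes are
# assumptions chosen to be consistent with the walkthrough.
TREE = [[3, 12, 8], [2, 4, 6], [2, 5, 14]]


def minimax(node, maximizing):
    """Plain minimax without pruning: every leaf is examined."""
    if isinstance(node, int):  # a leaf holds its utility value
        return node
    values = [minimax(child, not maximizing) for child in node]
    return max(values) if maximizing else min(values)


# Values of the left, center, and right MIN nodes, then the MAX root.
min_values = [minimax(child, False) for child in TREE]
root_value = minimax(TREE, True)
print(min_values, root_value)
```

On this assumed tree the MIN nodes evaluate to 3, 2, 2 and the root to 3, which matches the result that alpha-beta pruning reaches while skipping four leaves.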

    evaluate (node, alpha, beta)
        if node is a leaf
            return the utility value of node
        if node is a minimizing node
            for each child of node
                beta = min (beta, evaluate (child, alpha, beta))
                if beta <= alpha
                    return beta
            return beta
        if node is a maximizing node
            for each child of node
                alpha = max (alpha, evaluate (child, alpha, beta))
                if beta <= alpha
                    return alpha
            return alpha
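
The pseudocode above can be translated almost line for line into Python. The following is a sketch under a few assumptions that are not part of the original: a tree is represented as nested lists with integer leaves, an explicit `maximizing` flag replaces the "is a minimizing/maximizing node" checks, and a `visited` list is added only to record which leaves are actually examined. The leaf values are hypothetical, chosen to be consistent with the walkthrough.

```python
import math


def evaluate(node, alpha, beta, maximizing, visited):
    """Alpha-beta search; `visited` collects the leaves that get examined."""
    if isinstance(node, int):  # a leaf holds its utility value
        visited.append(node)
        return node
    if maximizing:
        for child in node:
            alpha = max(alpha, evaluate(child, alpha, beta, False, visited))
            if beta <= alpha:  # prune the remaining children
                return alpha
        return alpha
    for child in node:
        beta = min(beta, evaluate(child, alpha, beta, True, visited))
        if beta <= alpha:  # prune the remaining children
            return beta
    return beta


# Hypothetical tree: MAX root, three MIN nodes, three leaves each.
tree = [[3, 12, 8], [2, 4, 6], [2, 5, 14]]
visited = []
best = evaluate(tree, -math.inf, math.inf, True, visited)
print(best, visited)
```

With this assumed tree, the search returns 3 after examining only the leaves 3, 12, 8, 2, and 2, so four of the nine leaves are never visited. Note that a pruned MIN node returns a bound (here 2) rather than its exact minimax value, which is enough for the root to make the correct decision.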